OMB Mandates Political Bias Testing for AI Vendors Seeking Federal Contracts

White House Office of Management and Budget Rewrites the Playbook for AI Vendors: A New Mandate to Test for Political Bias

In a move that signals a deeper federal commitment to “trustworthy AI,” the Office of Management and Budget (OMB) issued a set of guidance documents today that will reshape how private companies develop, test, and sell artificial‑intelligence (AI) systems to the United States government. The OMB’s memo, published on its website and now covered by a number of policy outlets, requires any AI vendor seeking a government contract to provide documented evidence that its models have been rigorously tested for political bias. This is the latest in a series of federal efforts to ensure that AI does not inadvertently advance partisan agendas or undermine democratic processes.

Why Political Bias? A Quick Primer

AI models, especially large language models (LLMs) like GPT‑4 and other “generative” systems, are trained on massive swaths of the internet. As the OMB explains, this training data contains both factual content and the political slant of its creators. When a model is asked to generate content on politically charged topics, there is a risk that it could echo or amplify partisan narratives. The guidance cites a handful of high‑profile incidents, from AI chatbots generating biased commentary during the 2020 election to the Trump administration’s own forays into “AI policy” that critics say were too ideologically thin. The OMB’s new guidance is, in essence, a formal acknowledgment that AI could be weaponized to spread misinformation or reinforce echo chambers.

The Core Requirements

Bias‑Testing Protocols – Vendors must submit a “bias‑testing report” that demonstrates the model’s performance on a battery of political neutrality benchmarks. The OMB recommends using existing frameworks such as the Political Content Bias Benchmark (PCBB) and the Political Bias Detection Toolkit (PBDT) that have been developed by non‑profit research groups. Vendors are also encouraged to publish their test datasets and results for public scrutiny.

Model Documentation (MLOps Transparency) – The memo reiterates the federal transparency rules already in place (e.g., the FedML‑Transparency Standard). Vendors must provide a “Model Card” that details training data sources, known limitations, and the steps taken to mitigate bias. This includes any fine‑tuning procedures or post‑hoc filtering that could alter the political framing of outputs.

Continuous Monitoring – Contracts with AI vendors will now be subject to periodic audits, with the OMB reserving the right to request updates to the bias‑testing protocol. The agency’s guidance indicates that “bias is not static”; as political contexts evolve, so too must the testing process.

Compliance Verification – A dedicated OMB “AI Assurance Unit” will review submitted documentation. If a vendor fails to meet the baseline criteria, the contract could be suspended or terminated.

The guidance explicitly frames these requirements as part of the broader federal strategy to comply with the recently passed “AI Accountability Act,” which mandates a minimum of 90% transparency and 95% accuracy for any AI system used in public service.

Quotes From Key Officials

Catherine E. W. Davis, OMB Director of Digital Services: “AI has the power to accelerate public service, but that power comes with responsibility. We cannot afford to have a biased algorithm decide who gets a loan or how a public health advisory is communicated. These new guidelines are about putting the public first.”

Dr. Miguel S. Gonzalez, Chief Data Officer, U.S. Census Bureau: “We’ve seen how language models can misrepresent demographic data. By requiring vendors to test for political bias, we are adding a new layer of accountability that will benefit both government agencies and the public.”

Impact on the AI Vendor Landscape

For the private sector, the OMB’s guidance represents both a hurdle and an opportunity. Companies that already invest in bias‑mitigation research—such as OpenAI, Anthropic, and Microsoft’s Azure AI—may find that the new requirements reinforce their existing internal processes. Smaller startups, however, may struggle to meet the documentation demands without dedicated resources.

Industry analysts predict that compliance costs will rise by 15–25% over the next two years. Some firms have responded by lobbying for “flexible” exemption clauses, arguing that a blanket mandate could stifle innovation. Others have welcomed the clarity, noting that a standardized testing protocol could level the playing field, making it easier for new entrants to compete for federal contracts.

The Broader Policy Context

The OMB memo is not an isolated measure. The White House’s Office of Science and Technology Policy (OSTP) released a companion briefing in early March outlining a “Public Sector AI Framework” that emphasizes “ethics, safety, and human oversight.” The new bias‑testing requirement dovetails with that framework’s “Bias Mitigation” pillar. Meanwhile, the Federal Trade Commission (FTC) has also announced a forthcoming “AI‑Consumer Protection” rule that will scrutinize how political messaging is targeted through digital advertising.

On the international stage, the European Union’s AI Act, which takes effect next year, already requires “high‑risk” AI systems to undergo bias audits. The OMB’s direction may therefore serve as a benchmark for U.S. AI governance and could even influence the drafting of future trade agreements that include AI standards.

Critics and Counterarguments

Some civil‑rights groups, like the ACLU’s AI & Society Initiative, have expressed concern that focusing on “political bias” could mask other forms of systemic bias—racial, gender, or socioeconomic—that also manifest in AI outputs. “Political bias is only the tip of the iceberg,” said Dr. Amara Patel, director of the ACLU’s AI Initiative. “We must ensure that a comprehensive bias‑audit framework covers all dimensions of discrimination.”

Other critics, particularly from the tech sector, argue that the guidance may inadvertently favor larger incumbents with greater resources for compliance. “The burden is unevenly distributed,” said Jordan Lee, a policy fellow at the Center for Data Innovation. “We need to build tools and shared testing datasets so that smaller firms can compete on a level playing field.”

Looking Ahead

The OMB’s memo sets a clear, enforceable standard for AI vendors: if you want to do business with the federal government, you must now prove that your model is not inadvertently pushing partisan narratives. The coming months will see the first round of compliance submissions, audits, and perhaps a few high‑profile controversies. Meanwhile, vendors will likely start offering “bias‑audit‑as‑a‑service” to meet the new regulatory environment.

In a world where the line between technology and politics is increasingly blurred, the OMB’s directive is an early attempt to codify the norms that will govern the next wave of public‑sector AI deployments. Whether the policy succeeds in preventing politically biased algorithms from infiltrating federal decision‑making will be judged over time, but the very act of codifying such a requirement signals a decisive shift toward transparency, accountability, and, above all, the public interest.

Read the Full Washington Examiner Article at:

https://www.washingtonexaminer.com/news/white-house/3916060/omb-directs-ai-vendors-seeking-government-contracts-measure-models-political-bias/

by: TechRepublic

UK Parliament Unveils Comprehensive AI Controls to Balance Innovation and Public Safety

on: Mon, Dec 08th 2025

by: Boise State Public Radio

AI-Driven Independents: A Quiet Revolution Aiming to Topple America's Two-Party System

on: Mon, Dec 08th 2025

by: WTVD

Supreme Court Upholds Agency Independence, Blocks Trump-Era Power Grab

on: Fri, Nov 21st 2025

by: Time

AI Revolutionizes Political Campaigning with Real-Time Micro-Targeting

on: Tue, Nov 18th 2025

by: Seattle Times

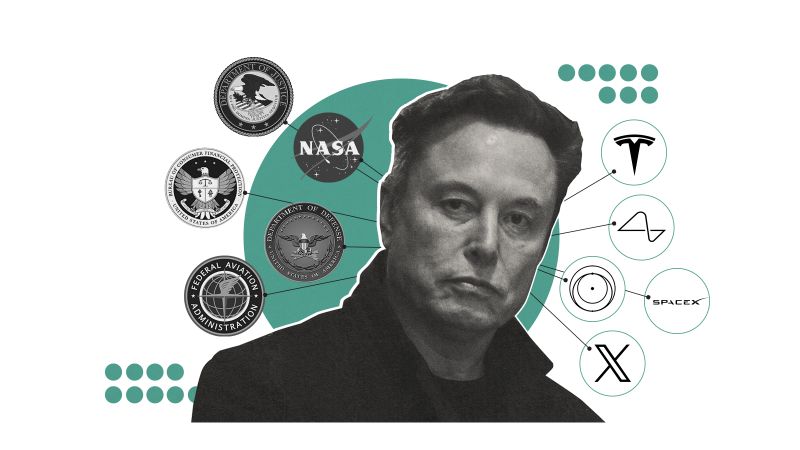

Elon Musk Revives Political Footprint: Back on the Campaign Trail

on: Mon, Nov 17th 2025

by: The New Indian Express

on: Mon, Nov 17th 2025

by: KERA News

on: Sun, Nov 16th 2025

by: reuters.com

U.S. Treasury Introduces Interim Sustainability Reporting Framework Amid Political Divide

on: Wed, Sep 24th 2025

by: KTBS

on: Sat, Aug 30th 2025

by: LancasterOnline

Lobbying: How public agencies spend millions to shape state government

on: Tue, May 06th 2025

by: CNN

on: Tue, May 06th 2025

by: Forbes

AI In Political Advertising: Considerations For Campaign Managers