Oklahoma Attorney General Blocks 311 Capital Management Contract

Overview of the Conflict

The dispute centers on the procurement and legal validity of a contract awarded to 311 Capital Management. According to reporting by The Oklahoman, the "Invest in Oklahoma" initiative seeks to leverage state resources to drive economic growth and financial returns. However, Attorney General Drummond's office has blocked the agreement, citing concerns that the contract does not meet the legal standards or procurement regulations required for state-funded initiatives.

Key Details of the Dispute

- The Target Entity: 311 Capital Management, the firm slated to manage or facilitate the investment strategy.

- The Program: "Invest in Oklahoma," an initiative designed to attract investment and stimulate state economic development.

- The Legal Intervention: Attorney General Drummond has officially rejected the contract, preventing its implementation.

- The Executive Push: Governor Kevin Stitt has advocated for the contract as a means to advance the state's economic interests.

- Core Issues: The rejection stems from perceived failures in the contractual language and the process by which the firm was selected.

Analysis of the Legal Standoff

The Attorney General in Oklahoma serves as the primary legal advisor to the state and its agencies. When a contract is submitted for review, the AG's office evaluates whether the agreement adheres to state law, protects the state from undue liability, and follows established procurement guidelines. The rejection of the 311 Capital Management contract suggests that the Attorney General found critical flaws that could expose the state to legal or financial risk.

From the perspective of the Governor's office, the "Invest in Oklahoma" project is likely viewed as a strategic necessity to keep the state competitive. The administration's drive to implement this contract reflects a desire for rapid economic mobilization. However, the legal vetting process serves as a check and balance to ensure that such speed does not come at the cost of transparency or legality.

Implications for State Governance

This clash highlights a recurring tension between executive ambition and legal oversight. By rejecting the contract, AG Drummond has effectively halted the momentum of the "Invest in Oklahoma" initiative in its current form. For 311 Capital Management, the rejection puts its projected role in the state's financial strategy on hold unless and until the contract is rewritten to satisfy legal requirements.

Furthermore, this situation raises questions regarding the transparency of the selection process for 311 Capital Management. If the contract was rejected based on procurement irregularities, it may indicate a need for a more rigorous and open bidding process to ensure that the state is receiving the best possible value and that the selection is free from undue influence.

Potential Next Steps

Moving forward, the Stitt administration has three options: it can renegotiate the terms of the contract with 311 Capital Management to address the AG's concerns, seek an alternative partner for the "Invest in Oklahoma" initiative, or challenge the AG's decision in court. A court challenge could lead to a prolonged period of litigation that would further delay the initiative.

Until a resolution is reached, the "Invest in Oklahoma" program remains in a state of limbo, serving as a reminder of the critical intersection between political goals and the rule of law within state government.

Read the full article in The Oklahoman at:

https://www.oklahoman.com/story/news/politics/2026/05/06/invest-in-oklahoma-contract-311-capital-management-rejected-ag-drummond-governor-kevin-stitt/89964103007/

on: Tue, Apr 28th

by: Washington Examiner

Xavier Becerra: Leveraging Administrative Expertise for Governor

on: Sat, Apr 18th

by: Las Vegas Review-Journal

on: Sat, Apr 18th

by: Republican & Herald, Pottsville, Pa.

Legal Battle Over Schuylkill County EMA Director Appointment

on: Mon, May 04th

by: Hubert Carizone

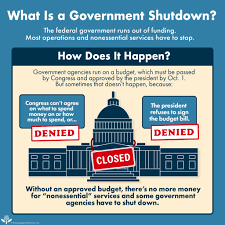

State Budget Deadlock: Systemic Dysfunction or Deliberate Negotiation?

on: Sun, Apr 19th

by: MSN

on: Fri, Apr 24th

by: AfroTech

DHHS Audit Uncovers Systemic Failures in Employee Termination Process

on: Mon, Apr 20th

by: Los Angeles Times

Bulgaria's Political Shift: A Mandable for Stability and Reform

on: Sun, Apr 19th

by: kcra.com

on: Sun, May 03rd

by: Orlando Sentinel

The End of Chevron Deference: A Redistribution of Federal Power

on: Tue, May 05th

by: Business Insider

on: Mon, May 04th

by: Hubert Carizone

on: Sun, Apr 19th

by: thedispatch.com